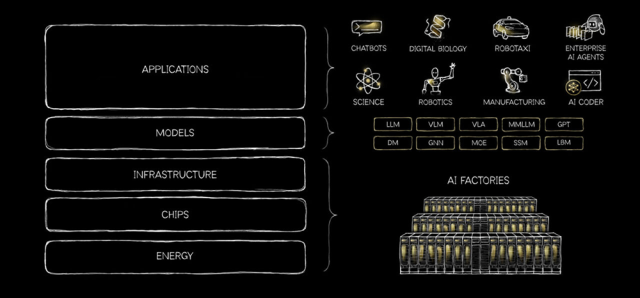

NVIDIA’s annual developer conference (San Jose, March 16–19) has become a bellwether for data center physical infrastructure (DCPI). This year was no exception. NVIDIA DSX took center stage: a full-stack platform for designing, building, and operating AI factories that now counts over 200 partners in its ecosystem. Several major DCPI vendors, including ABB, Eaton, Mitsubishi Electric, Schneider Electric, Siemens, Trane Technologies, and Vertiv, unveiled co-designed solutions in a tightly choreographed wave of announcements. It was a concrete expression of what CEO Jensen Huang declared in his keynote: “this conference is going to cover every single layer of the five-layer cake of artificial intelligence, from land, power and shell the infrastructure to chips, to the platforms, the models, and, of course, the most important, and ultimately what’s going to take get this industry taken off, is all of the applications.”

A Factory for Designing Factories

Among the DSX components, the Omniverse DSX Blueprint particularly stood out. Now generally available, it is a platform for modeling data center layouts, power topologies, and thermal behavior, using simulation-ready 3D models contributed by infrastructure partners in OpenUSD format. It is an ambitious vision at a time when most data center design still relies on traditional CAD and BIM applications and digital twin adoption is in its infancy. This is NVIDIA being characteristically visionary: anticipating what will eventually become a necessity, even if today it can look like overkill.

The industry is moving from adding capacity in the teens of gigawatts a year to potentially 100 GW+ in a decade or less. At that scale, without AI-assisted tools in design, construction, and commissioning, it is hard to see how projects come online at the pace required, particularly given well-known skilled labor shortages. Just as semiconductor design has become fundamentally dependent on AI tools, data center design at gigawatt scale may have no choice but to follow the same path. The Omniverse Blueprint is NVIDIA’s bet on removing the barriers to building AI factories at scale.

But while the Omniverse Blueprint captures the imagination, the conversations dominating the show floor among DCPI vendors were far more immediate. Five topics in particular stood out: the growing heterogeneity of inferencing cluster racks, the fast-approaching 800 VDC transition, the ramp-up of liquid cooling designs, the potential commoditization within the MGX ecosystem and, since no data center discussion would be complete without it, power availability.

The End of the One-Rack Era

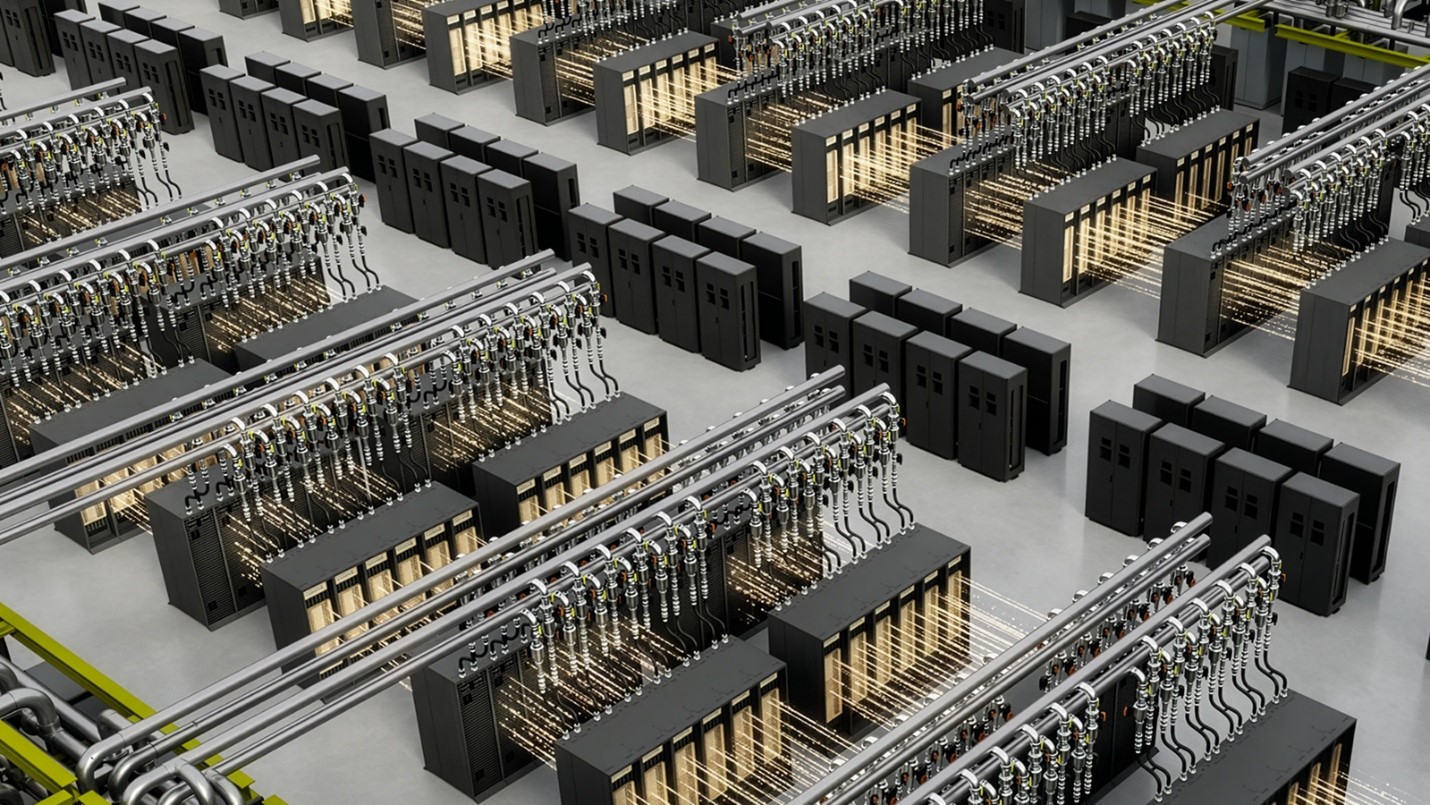

For the past two NVIDIA generations, data center designers could plan around a single workhorse rack. The Hopper and then Blackwell platforms offered a largely homogeneous building block: one compute rack architecture, scaled across rows and halls, with relatively uniform power and cooling profiles. GTC 2026 broke that pattern decisively.

NVIDIA introduced not one but several rack configurations under the Vera Rubin umbrella. The NVL72 remains the flagship: 72 Rubin GPUs and 36 Vera CPUs in a fully liquid-cooled, fanless, cableless enclosure exceeding 200 kW per rack. Alongside it, a CPX rack adds Rubin CPX accelerators to the Vera Rubin superchip trays, optimized for inference performance. A Vera CPU-only rack targets inference and data preprocessing without GPU acceleration. And the LPX rack with Groq’s LPUs debuts third-party silicon within NVIDIA’s own reference design.

This is a big departure. It is also entirely expected. A single architecture serving every workload was only tenable while AI infrastructure was synonymous with large-scale training. As workloads diversify into fine-tuning, inference, and agentic AI applications, infrastructure must follow suit. Henry Ford was able to offer the Model T alone for only so long.

For DCPI vendors, the implications are immediate. Heterogeneous clusters mean managing mixed rack densities, uneven heat loads, and varying liquid cooling requirements coexisting on the same row. This is a design and operational challenge that will demand far more flexibility from infrastructure solutions than the relatively uniform AI halls of the Hopper and Blackwell era.

High Voltage, High Stakes

For the biggest disruption in data center power architecture in decades, 800 VDC power distribution received remarkably little attention in NVIDIA’s official channels. The topic was absent from Jensen’s keynote, and NVIDIA has made no significant announcements since the technical blog and whitepaper released alongside last year’s OCP Global Summit, an event we covered in a previous blog. Its messaging on the architecture has been sparse.

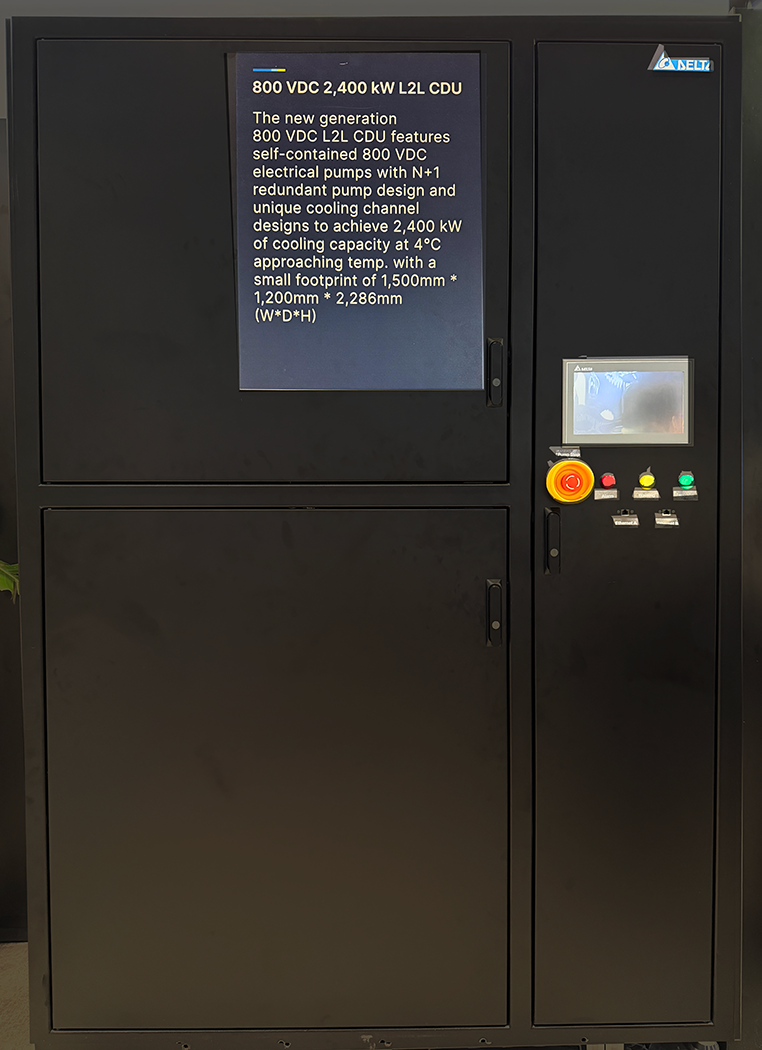

Among vendors, however, the picture could not have been more different. 800 VDC was the talk of the town. Multiple vendors showcased equipment and prototypes, and many dedicated sessions explored everything from semiconductor building blocks to rack-level power delivery and facility integration. Vendors like Delta Electronics, Texas Instruments, and STMicroelectronics focused their marquee March 16 announcements squarely on 800 VDC developments, an unusual departure from the lockstep of similar-themed announcements that have become the norm at GTC.

Such advancements are important and necessary, but many questions about the 800 VDC topology remain unanswered. In his GTC session entitled “A Safe, Efficient, and Scalable Approach to 800 VDC Architecture,” Eaton’s J.P. Buzzell referenced an OCP white paper expected in the coming weeks. The draft should bring more clarity to the architecture, but there is still a long way to go before engineers can fully specify an 800 VDC data hall. And even once the specification matures, supply chains for components will need to be stood up and safety guidelines codified before broad deployment can begin.

45 Degrees of Separation

Much like 800 VDC, another infrastructure shift that made waves in an earlier NVIDIA keynote received little airtime at GTC. At CES in January, Jensen highlighted the move toward 45°C warm-water inlet temperatures, a significant departure from the designs more commonly deployed today. At GTC, the topic barely surfaced beyond Jensen’s brief nod to Vera Rubin’s 45°C specification.

NVIDIA remains committed to 45°C, but there is no sign of it doubling down or rushing to get there. The convergence toward 45°C architectures will take longer to play out. Facility-side infrastructure needs to be adapted, and operators may remain reluctant to optimize the cooling system if doing so carries any risk of reducing accelerator performance. In an age of highly constrained compute, every token counts, and the imperative to maximize throughput trumps facility-level efficiency optimization.

The water temperature debate, however, was far from the only liquid cooling story at GTC. On the show floor, the direction of travel for CDU capacity was unmistakable. As pod architectures scale and per-rack thermal loads climb, vendors responded with a new class of multi-megawatt CDUs. These are a step change from the capacities that dominated the market just a year ago, and we expect this upward trend to continue as next-generation pod architectures push thermal envelopes further still.

One interesting product on the exhibition floor was a direct-current CDU that can connect straight to the 800 VDC bus. It is a thoughtful choice that adds flexibility for operators designing next-generation whitespace, even if we expect most large units to be housed in mechanical galleries in the grey space, where traditional AC power distribution is likely to remain the standard for the foreseeable future. Either way, the convergence of power and cooling design choices is becoming impossible to ignore.

MGX and the March Toward Standardization

The growing specificity of NVIDIA’s reference architectures—from rack dimensions and cooling requirements to power topologies and simulation-ready digital models—raises an uncomfortable question for DCPI vendors: as NVIDIA defines more of the design, what room is left for differentiation?

The “MGX wall” on the show floor—displaying components from dozens of vendors side by side within the standardized MGX ecosystem—made this tension visible. By standardizing interfaces, form factors, and performance specifications across the infrastructure stack, MGX makes it easier for operators to mix and match components from multiple suppliers. That is a win for deployment speed and supply chain resilience. But it also compresses the space in which vendors can compete on anything other than price and availability—the classic hallmarks of a commoditizing market.

Not all vendors will be affected equally. Those with deep system integration expertise, intelligent controls, service capabilities, or engineering and quality differentiation in mission-critical components will find ways to stay above the commoditization line. But for vendors whose value proposition rests primarily on the physical product itself, the tightening of NVIDIA’s specifications around their equipment is a trend worth watching closely.

Unlocking the Grid

Perhaps the most consequential launch at GTC came not from the chip announcements but from DSX Flex, NVIDIA’s software layer for connecting AI factories to grid services and orchestrating dynamic power adjustment. With NVIDIA’s order book continuing to grow, the math is simple: the gap between the power needed to energize forecast chip shipments and the pace of grid upgrades is too large to ignore. And the only near-term path to more power is not launching data centers into space, but tapping into existing grid capacity when it is not being used.

This was a point I raised directly with Jensen during the event. His response was unequivocal: data centers must change their relationship with the grid and be willing to accept less stringent SLAs in exchange for faster access to capacity. AI workloads will need to flex around supply constraints rather than demanding always-on, fully firm power. In a world where tokens per watt is becoming the defining metric for AI factory economics, accessing these watts and making the most of them becomes make-or-break. Startups like Emerald AI and Phaidra are building the technology to support this, but unlocking it at scale requires more than just engineering ingenuity. It depends on the willpower and aligned incentives of the primary gatekeepers involved: utilities, grid operators, and their regulators.

What This Means for the DCPI Market

Dell’Oro Group’s latest DCPI market update, released during GTC week, showed the market reached $10.9 billion in 4Q 2025—up 20% year-over-year—with synchronized backlog surges across vendors in power and cooling. The AI supercycle continues to drive record investment, and GTC 2026 did nothing to dampen expectations. The tone was one of confident optimism—about the trajectory of AI, the scale of compute still to be built, and the opportunities ahead for data center vendors.

Regardless of whether that optimism proves fully warranted, GTC 2026 left little doubt: the DCPI market is entering its most consequential chapter yet. Stay tuned as we continue to track these shifts—and connect with us at Dell’Oro Group to discuss these trends as they unfold.

Vendor Press Releases

Accelsius:

Delta Electronics:

- Delta’s Power, Cooling and Microgrid Solutions Showcased at NVIDIA GTC to Bolster the 800 VDC Architecture of Next-gen AI Factories

- Delta Ushers a New Era of AI Digital Twins based on NVIDIA Omniverse at NVIDIA GTC with Real Applications for its Building Automation and Smart Manufacturing Solutions

Eaton:

Foxconn:

Flex:

- Flex Accelerates AI Factory Deployment with NVIDIA Omniverse DSX Reference Designs

- Flex Launches 800 VDC Power Rack for Next-Generation NVIDIA AI Infrastructure

Hitachi:

LiteOn:

Schneider Electric:

STMicroelectronics:

Texas Instruments:

Trane Technologies:

Vertiv: