Nokia Emphasizes Cloud Native and AI at Its Core User Group, May 18th – 21st 2026, in Munich

Kal De, SVP at Nokia, effectively captured mobile operators’ AI angst by including a Lewis Carroll quote in the opening keynote of Nokia’s 32nd Core User Group (CUG): “…it takes all the running you can do to keep in the same place. If you want to get somewhere else, you must run at least twice as fast as that!”

This year’s Munich event was the largest-ever gathering of Nokia’s core customers, with 500 people taking time from their frenetic work pace to hear Nokia’s latest core product announcements, and to drink some world-renowned beer.

One operator was refreshingly honest, indicating, “we have no choice but to move to 5G SA…the whole industry is going cloud native.”

Off the record, all telco vendors (not just Nokia) lament the long time to adoption of 5G Standalone by operators outside China. Meanwhile, mobile operators complain that 5G has not delivered the monetization opportunities it promised at the outset.

At CUG2026, Nokia’s executives aimed to bridge the gap between a pie-in-the-sky core network vision and today’s operator reality. In that, the conference lived up to its objective, walking a fine line between aspirational presentations of AI-fueled autonomous frameworks and demos of available (or nearly available) solutions to help mobile operators save, or even make, money.

Both on stage and in private conversations, operators explained why they are adopting 5G SA: “we have no choice… the whole industry is moving cloud native.” If that’s not a resounding endorsement, at least it’s a refreshing bout of honesty. One large telco explained that the adoption of cloud native meant shifting the majority of the operator’s effort from developing new services to maintaining the complex network. Clearly there’s an opportunity for the industry to do better.

Ideas for new revenue streams ranged from AI-enabled voice to exposing APIs, but in a context of declining ARPU, the formula for increasing telco profitability was not clear.

Nokia responded to the call with announcements (some of which are still not public) centered on automating cloud native networks, embracing a multi-cloud reality, and augmenting network resilience, all within a vision in which operators transit tokens and core network functions use AI to predict the future and manage themselves.

AI, automation, and trust were key themes. But less clear was the formula to meaningfully increase telco profitability in a world of declining ARPU. High-profile telco customers discussed concepts such as AI-enabled voice, monetizing network data, commercializing authorization and identity, and exposing APIs for third parties to create their own AI Agents. Nearly every revolutionary idea aiming to push mobile network operators up the value chain was accompanied by the caveat that a complex mosaic of regulatory restrictions was an impediment to meaningful transformation.

Escalating hardware costs were a reality facing every participant in the room, and operators expressed the need to “sweat their assets” by extending life cycles.

Nokia answered the monetization musings by presenting Network as Code, which provides a platform for developers to access network functionalities of major operators via APIs. Nokia VP Shkumbin Hamiti explained that, after acquiring Rapid’s API hub in 2024, the company fortuitously discovered the Network as Code platform was a perfect place to host MCP-based AI Agent access. Whether this approach will be able to build meaningful telco revenue will depend on the ecosystem of developers and system integrators that Nokia and its customers are able to amass.

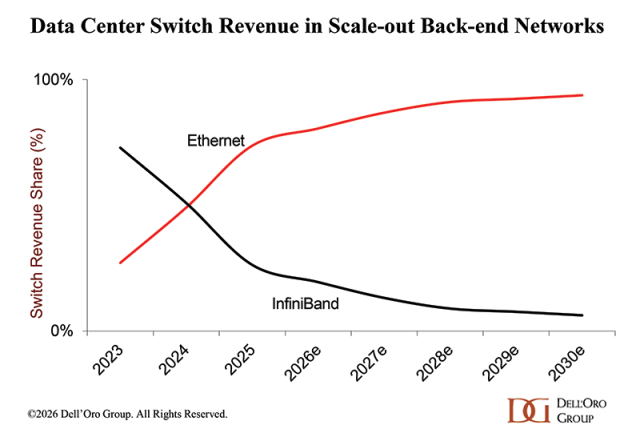

Presentations about AI can sometimes feel theoretical, but there was one harsh reality that everyone in the room was facing: escalating hardware costs caused by the AI infrastructure boom. Service providers indicated they were more open to using the public cloud to host network functions in times of elevated server costs, while Red Hat proposed an approach to using hardware more efficiently. But Nokia did not directly address the pleas by operators to prolong network function life cycles. Of the 89 mobile operators in attendance, those who wish to sweat their hardware assets with Nokia concessions on end-of-support dates will have to take up the discussion beyond Nokia’s CUG.

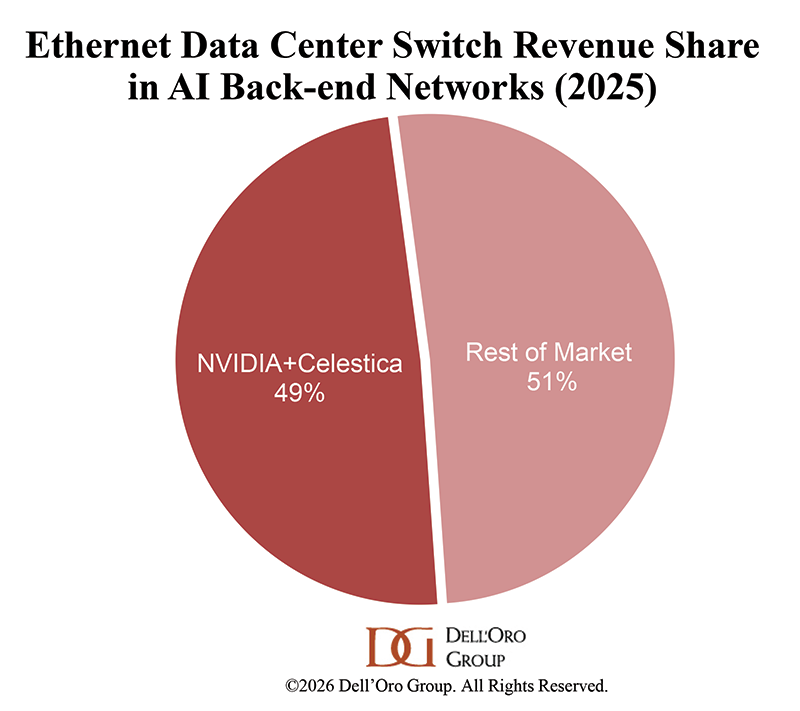

Nokia unveiled a new dimension of the hyped NVIDIA partnership… this one relates to the core network, and not the RAN.

Hardware may be expensive, but Nokia was still able to bring some costly components up to the main stage, announcing a previously undisclosed dimension of the company’s partnership with NVIDIA. Last October, NVIDIA’s $1 billion investment in Nokia seemed to be all about AI and RAN. But on Tuesday, Marcelo Madruga, Head of Core Networks’ Technology, revealed that there was a core network aspect too. Dramatically unveiling an edge server from under a blue blanket, Madruga explained that, in addition to the UPF network function running on Dell, it contained 8 NVIDIA GPUs and had the capacity to process a thousand tokens per second. “We’re going to create the AI Grid,” he announced, “and we would like you to help us define what it will mean.”

“Should I hand over the keys to my network to AI Agents?”

If token delivery was too far-fetched for some operators struggling with cloud native, there were plenty of other near-term roadmaps, both hardware and software, to keep their attention. Breakout rooms contained lively presentations and discussions about both Nokia core network solutions and partner offers, with sessions by AMD, AWS, Dell, Google, Intel, Everpure, and Red Hat. NVIDIA was notably absent.

From the plenary sessions to the breakout rooms to the informal discussions over lunch, there was a general sense that AI would soon play a pivotal role in resolving the complexity of the cloud native core network. Automation Strategy VP Deepa Ramachandran asked facetiously, “Should I hand over the keys to my network to AI Agents?” and instead proposed that operators get agents to do the heavy lifting, gathering logs and doing root cause analyses. Indeed, the operator whose workforce was consumed by managing the infrastructure instead of developing new services announced that it aims to rebalance the effort by increasing automation. And as for the monetization problem? Said one major telco: “with 5G Advanced, the best is yet to come.”