How Cisco Live 2026 framed AI as a catalyst for enterprise-wide infrastructure modernization

At Cisco Live 2026, one message came through clearly: AI infrastructure is no longer just a data center conversation. The next phase of AI adoption will force enterprises to modernize across the full technology stack – from data center fabrics and campus networks to security, identity, observability, and edge operations.

Cisco’s executives framed the company’s opportunity around three outcomes: building AI-ready data centers, enabling future-proof workplaces, and delivering operational resilience. That structure is important because it shifts the discussion away from individual products and toward a broader platform strategy. Cisco is not positioning AI as a single workload or a single refresh cycle. It is positioning AI as a catalyst for infrastructure modernization across nearly every part of enterprise IT.

AI Infrastructure Is Spreading Beyond the Core

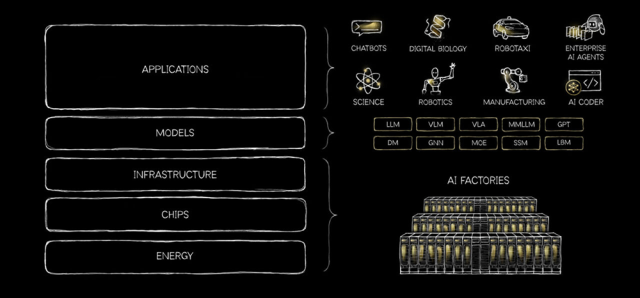

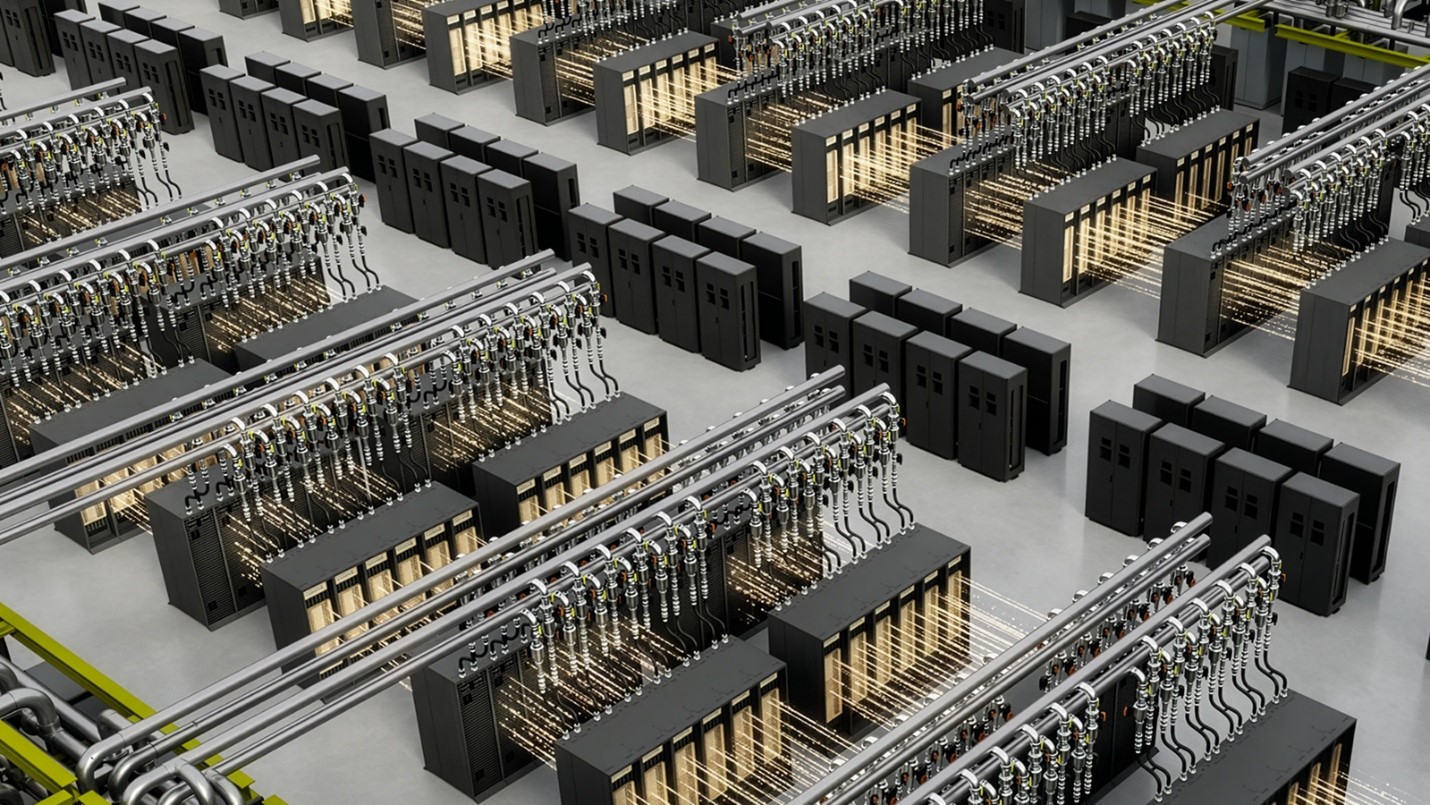

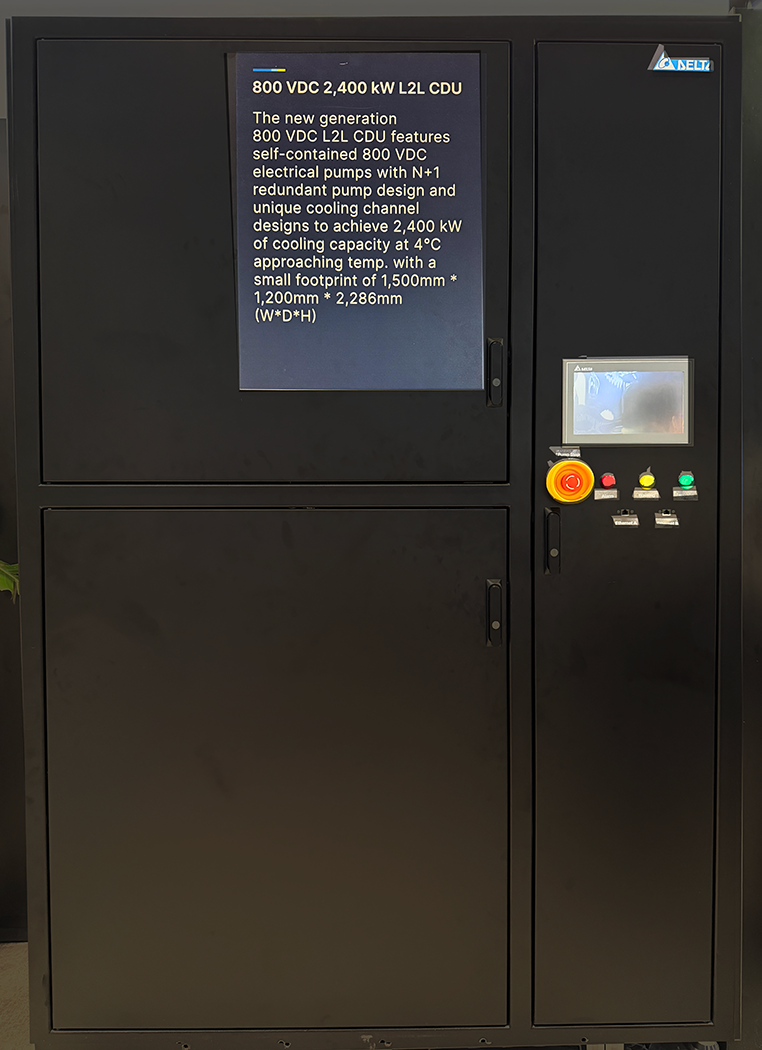

The first major theme was the expansion of AI demand beyond centralized compute environments. The early AI infrastructure discussion has been dominated by GPUs, data center capacity, power, and cooling. Those remain critical constraints. But Cisco’s view is that the next bottlenecks will emerge in the networks and systems that connect users, applications, devices, and AI agents.

That means AI readiness will increasingly extend into the campus, WAN, branch, and edge. As inference moves closer to where data is created and decisions are made, enterprises will need networks that can support higher performance, stronger identity controls, lower latency, and more automated operations.

This creates a natural modernization driver. The gap between today’s enterprise infrastructure and what AI-enabled operations will require is becoming clearer. Refresh cycles such as the Catalyst 9000 upgrade path are not just about replacing aging hardware; they are about preparing the enterprise edge for AI workloads, physical AI, stronger security enforcement, and more resilient operations.

Security Becomes an AI Infrastructure Requirement

The second theme was security. Cisco’s senior executives connected traditional infrastructure risk with new AI-specific threats. Known vulnerabilities, unpatched systems, zero-days, and operational complexity remain major challenges. But AI adds another layer: agent behavior, model drift, hallucinations, and non-human identities.

The last of these, non-human identities, may become one of the most important. As AI agents proliferate, identity is no longer limited to people, devices, and applications. Enterprises will need to govern and secure autonomous or semi-autonomous actors moving across systems. Cisco’s acquisition of Astrix Security fits into this broader need to manage non-human identities as part of the enterprise security model.

The implication is that AI security cannot be treated as a bolt-on. It has to be designed into the infrastructure, the development lifecycle, the observability layer, and the policy framework. This is where Cisco’s platform strategy becomes more relevant. The more AI touches every domain, the harder it becomes for customers to manage risk through disconnected tools.

Observability Must Expand from Infrastructure to AI Workflows

A third theme was observability. Traditional infrastructure monitoring tells enterprises whether the network, applications, and systems are performing as expected. AI introduces a different monitoring problem: are models, agents, and AI workflows behaving as expected?

That is where the Galileo acquisition becomes strategically relevant. By folding Galileo into Splunk Observability, Cisco is extending observability from infrastructure performance into AI workflow behavior, including issues such as hallucination detection and drift analysis.

This represents a meaningful shift. In the cloud era, enterprises needed new tools to secure and monitor cloud-native development. In the AI era, they will need a similar toolchain for AI development, deployment, and runtime governance. Infrastructure observability and AI observability will increasingly need to work together.

Sovereignty and Hybrid Deployment Become Strategic Advantages

Cisco’s senior executives also highlighted growing demand for sovereign and compliance-driven infrastructure. This is not just a regional issue. As governments and regulated industries place more emphasis on where data resides, how infrastructure is controlled, and who manages critical systems, sovereign cloud requirements are likely to influence a larger share of future technology spending.

Cisco’s advantage here is breadth. Its portfolio spans networking, security, observability, compute partnerships, and hybrid deployment models. That matters because sovereign strategies rarely fit neatly into one architecture. Some customers will need on-premises control. Others will need hybrid models. Others will want cloud-like operations with regional compliance.

This plays into Cisco’s broader positioning: global reach, local partner presence, and the ability to support customers across different operating models.

The Platform Is the Strategy

The common thread across Cisco Live was platform integration. Cisco Cloud Control was positioned as an umbrella management and policy layer across the portfolio. The goal is to reduce operational burden, accelerate time to value, and give customers a more integrated experience than they would get from managing multiple point products.

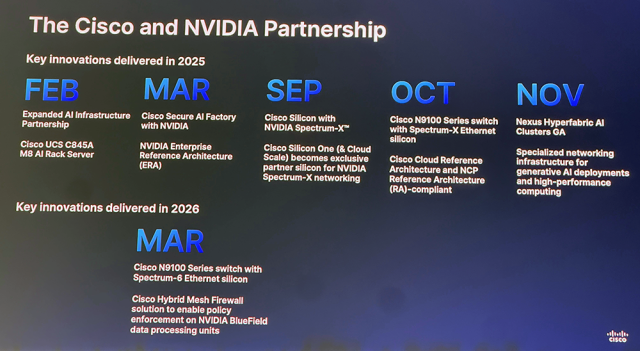

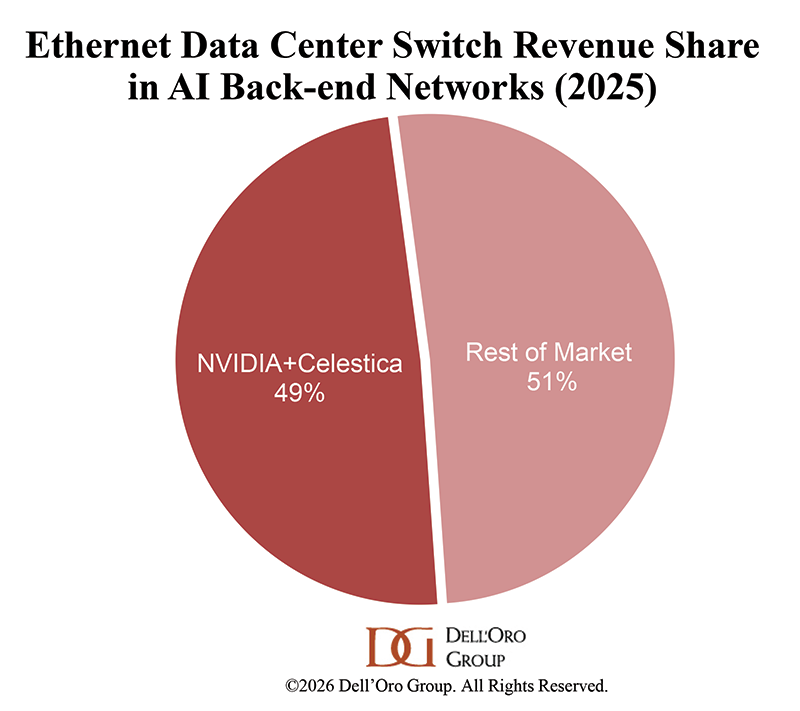

That does not mean Cisco is pursuing a closed strategy. Cisco’s senior executives emphasized open standards, APIs, ecosystem partnerships, and customer choice. The NVIDIA partnership is a good example. Cisco is working with NVIDIA on AI accelerators and on integrating Spectrum technology, while also signaling support for multiple AI accelerator partners across training and inference.

This balance matters. AI infrastructure will not be built by one vendor alone. But customers will still want simplified operations, unified management, and accountability across increasingly complex environments. Cisco’s bet is that openness and platform integration can coexist.

Net-Net

The most important takeaway from Cisco Live 2026 is that Cisco is using AI to reframe infrastructure modernization. The story is not only about AI-ready data centers. It is about AI-ready enterprises.

That means modernizing the data center, campus, WAN, edge, identity layer, security architecture, and observability stack together. It also means preparing for a world where AI agents become operational actors, sovereign requirements shape buying decisions, and resilience becomes a board-level priority.

Cisco’s strategy appears to be moving in a clear direction: use AI as the demand driver, platforms as the operating model, partnerships as the ecosystem lever, and security as the connective tissue. The winners in this next phase of infrastructure will not simply be the vendors with the best hardware. They will be the vendors that help customers turn complexity into an integrated, resilient, and AI-ready operating environment.