Below are some of the MWC takeaways related to end-user drivers, AI RAN, 6G, and Open RAN.

It is all about the 0.06%

With mobile data traffic growth slowing and multiple data points suggesting that mobile networks have significant excess capacity, the focus is shifting toward uplink (UL) traffic growth (Ericsson estimates UL traffic could grow by 3x every five years), network differentiation, and the RAN investment needed to dimension networks for the AI era.
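To put the 3x-every-five-years UL estimate in annual terms, the short sketch below converts a multi-year growth multiple into the implied compound annual growth rate. The function name and the assumption of smooth year-over-year compounding are ours for illustration; the only input taken from the text is the 3x-in-five-years figure.

```python
def implied_annual_growth(multiple: float, years: float) -> float:
    """Return the compound annual growth rate implied by a total growth multiple.

    Assumes growth compounds evenly each year (an illustrative simplification).
    """
    if multiple <= 0 or years <= 0:
        raise ValueError("multiple and years must be positive")
    return multiple ** (1.0 / years) - 1.0


# 3x over five years, per the estimate cited above.
ul_cagr = implied_annual_growth(3.0, 5)
print(f"Implied UL growth: {ul_cagr:.1%} per year")
```

Under these assumptions, 3x over five years works out to roughly 25% growth per year.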

Impressive demos featuring robots serving drinks, busy concerts, or the ability to look up information using smart glasses were less interesting than the 0.06%—a number that every investor, financial analyst, and 6G skeptic now seems to have memorized. Against a backdrop of low network utilization, this figure became one of the key focal points of the show.

For those who did not read Ericsson’s June 2025 Mobility Report, 0.06% is the share of total network traffic originating from GenAI. The concern is that while AI is proliferating rapidly, its impact on the mobile network—for now—is negligible.

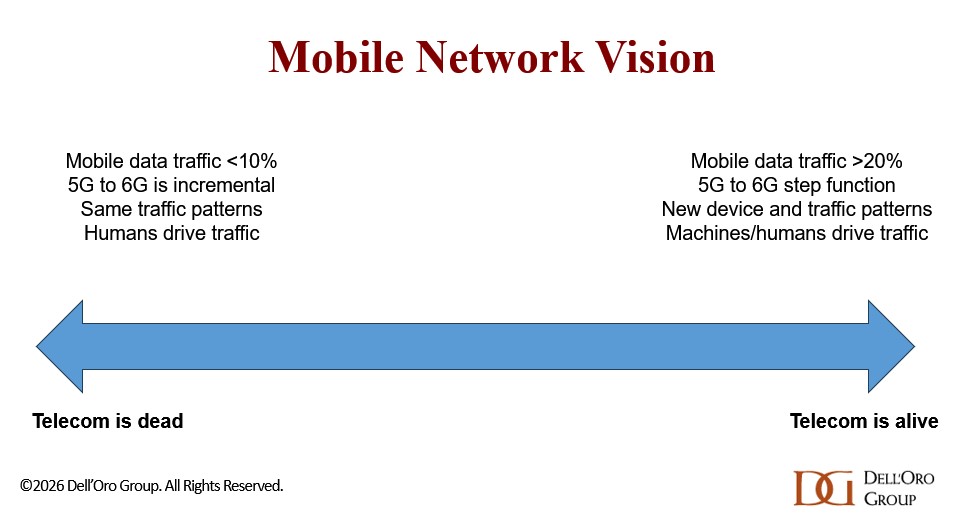

Two different mobile network visions

As we have discussed in various reports and blogs, we believe there are, at a high level, two very different mobile network visions evolving.

The “telecom is dead” narrative is largely driven by the mobile data traffic growth trajectory. The key argument is that humans can only consume so much smartphone video in a day; therefore, mobile data traffic growth will soon plateau, reducing the need for new 6G spectrum.

The “telecom is alive” vision is built on the assumption that it is still early days in the AI era. Even with GenAI accounting for just 0.06% of total traffic, this camp is more optimistic about both human-driven and machine-driven traffic. On the human side, the expectation is that new devices—better suited for environments where data is continuously recorded, analyzed, and uploaded—will emerge and significantly change mobile traffic patterns. Smart glasses are a strong contender, though they are not ready yet.

Machines and Physical AI are also expected to have a profound impact on everyday life for both consumers and enterprises. Fixed networks/Wi-Fi will play an important role, but they will need to be complemented by high-performance cellular connectivity.

These two very different visions are important, as they will shape how operators approach capex, architectural shifts, the timing of 6G, and the need for AI RAN, among other things.

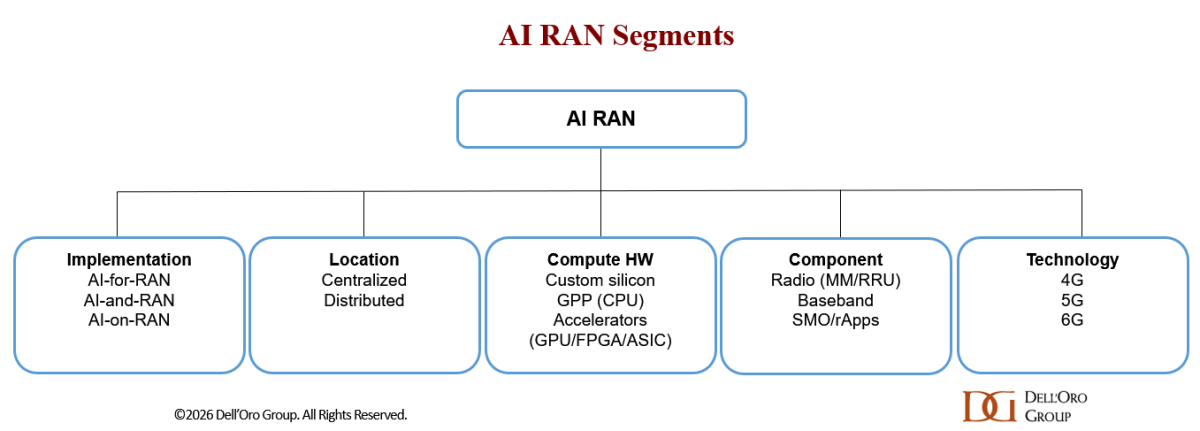

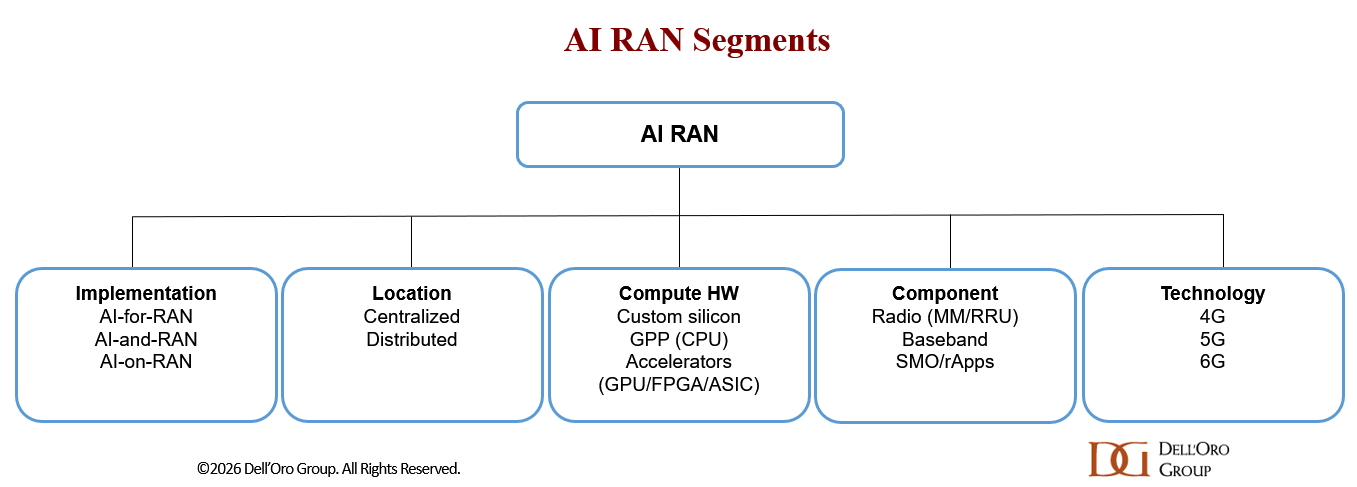

All roads lead to AI RAN

MWC reinforced what we have already communicated:

- AI RAN is happening (across all RAN layers)

- It will play a major role in the second half of 5G and from the outset with 6G

- The base case is that AI-for-RAN will dominate over the forecast period

- The GPU RAN conversation is evolving

Operators are no longer asking why GPUs might be relevant, but rather where and when they make sense. Expectations for GPU RAN are still modest, but the topic has clearly moved out of the noise.

Please see the recently published AI RAN blog for more details.

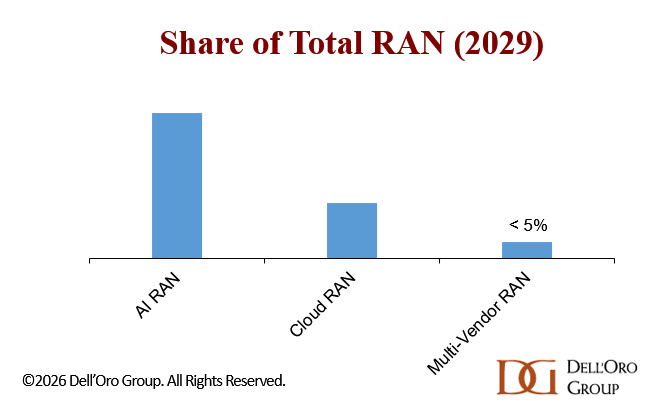

Open RAN/vRAN takes a backseat, but remains important

AI RAN has moved into the passenger seat, while Open RAN/vRAN/Cloud RAN was clearly pushed to the backseat at this year’s MWC. Still, even with reduced Open RAN marketing, most conversations and demos support our broader message:

- Open FH is increasingly being specified as a baseline capability for next-generation RAN platforms. Ericsson plans to have 160 Open-RAN-proven radios by the end of 2026. Similarly, Nokia’s recently introduced AI-RAN-ready Doksuri radios include compatibility with Open FH standards. Samsung, 1Finity, and NEC are already strong proponents of Open RAN/vRAN.

- Supplier diversity is not improving. RAN market concentration continues to increase, and Open RAN is most often deployed in single-vendor configurations.

- Multi-vendor Open RAN remains rare, although one European Tier-1 operator believes our forecast may be too pessimistic.

- Vendor strategies are shifting. Both Mavenir and NEC have recently revised their RAN strategies. Mavenir is focusing more on small cells and non-terrestrial networks (NTN), while NEC is prioritizing vRAN and Massive MIMO.

- The long-term outlook remains positive, though forecasts were revised downward in the January update.

- It is still not guaranteed that Open RAN will become part of the 3GPP standards.

Please see the recently published Open RAN blog for more details.

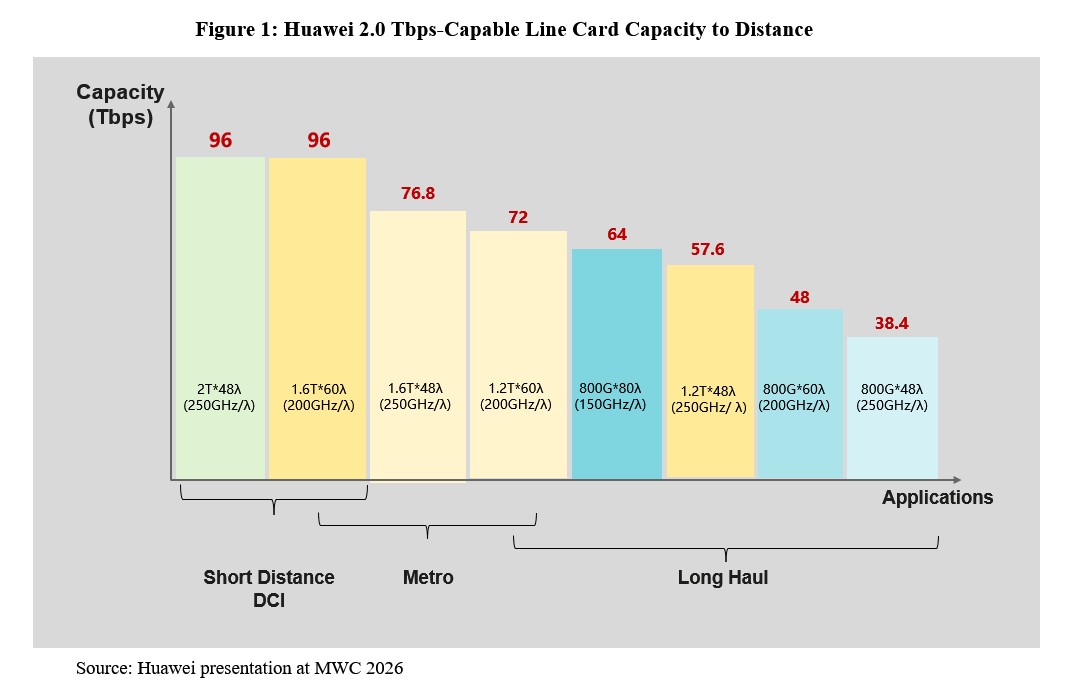

Massive MIMO – upside with 6G and FDD

Massive MIMO has been a major success. In addition to capacity gains, operators have used Massive MIMO to improve range and minimize incremental cell-site investments. We estimate that these higher-MIMO configurations accounted for roughly half of 5G RAN revenues between 2018 and 2025.

With upper mid-band now covering more than 55% of the global population (per the Ericsson Mobility Report), growth will become more challenging. So far, the focus has been on upper mid-band TDD, while FDD and 6.4 GHz+ remain largely untapped.

FDD Massive MIMO is gaining attention because:

- UL traffic is now growing faster than DL

- Technology improvements are helping reduce size and improve form factors

Because of the lower carrier frequencies, size remains a challenge in FDD spectrum. Huawei marketed its 28 kg 1.8+2.1 GHz FDD Massive MIMO unit as the industry’s lightest.
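The link between carrier frequency and antenna size can be sketched with basic wavelength arithmetic. The example below assumes half-wavelength element spacing, a common rule of thumb rather than any vendor's actual design, and uses 3.5 GHz as a representative upper mid-band TDD frequency (our assumption). The 1.8 GHz value comes from the FDD unit mentioned above.

```python
SPEED_OF_LIGHT_M_S = 299_792_458  # metres per second


def half_wavelength_cm(freq_ghz: float) -> float:
    """Half of the free-space wavelength, in centimetres, at the given frequency."""
    wavelength_m = SPEED_OF_LIGHT_M_S / (freq_ghz * 1e9)
    return wavelength_m / 2 * 100


def relative_array_area(low_ghz: float, high_ghz: float) -> float:
    """How much larger a same-element-count planar array is at the lower frequency.

    Assumes element spacing scales with wavelength in both dimensions.
    """
    return (high_ghz / low_ghz) ** 2


for f in (1.8, 3.5):
    print(f"{f} GHz: element spacing of about {half_wavelength_cm(f):.1f} cm")
print(f"Area ratio, 1.8 GHz vs 3.5 GHz: about {relative_array_area(1.8, 3.5):.1f}x")
```

With these simplifications, an array with the same number of elements needs close to four times the panel area at 1.8 GHz as at 3.5 GHz, which is why weight and form factor draw so much attention in FDD bands.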

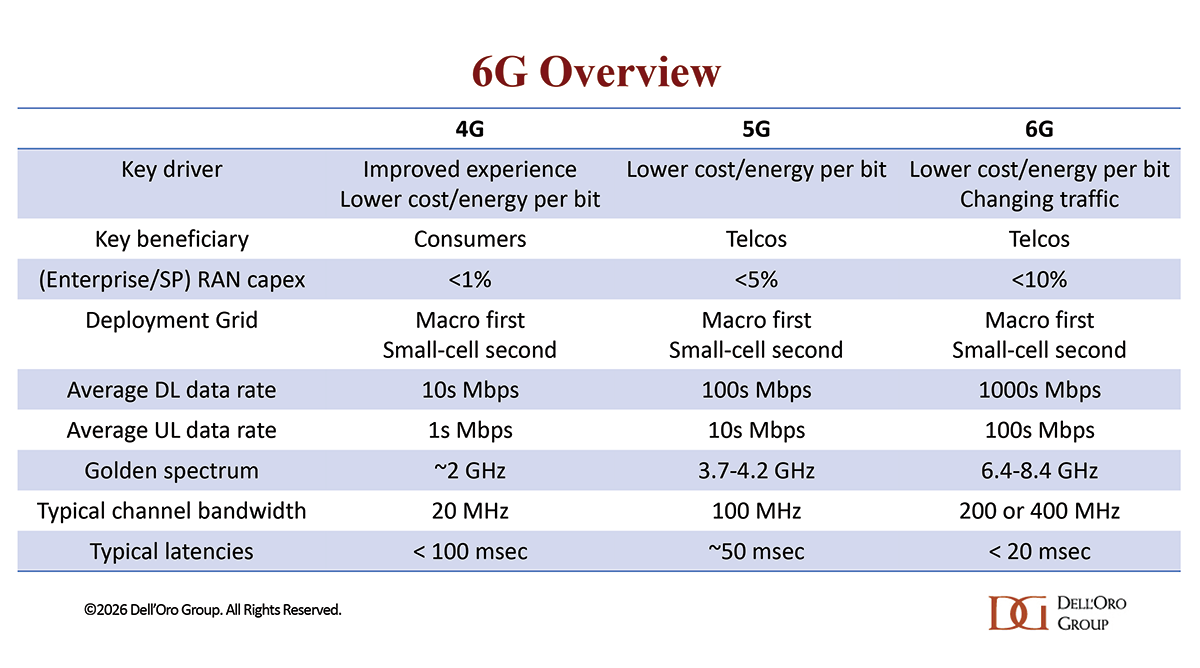

6G – no change to consensus outlook

6G was not as prominent as AI RAN, but the discussions and demos we saw reinforce our existing view:

- 6G is now inevitable—focus has shifted from if to how and when

- Technology ramp is expected around 2029/2030

- 4–8.4 GHz is emerging as the “golden spectrum”

- The existing macro grid will provide the foundation, with Massive MIMO playing a key role

- A more optimistic network vision is improving sentiment—6G is not just about capacity, but also about changing traffic patterns as machines account for a larger share of total traffic

- AI-native architecture will be central